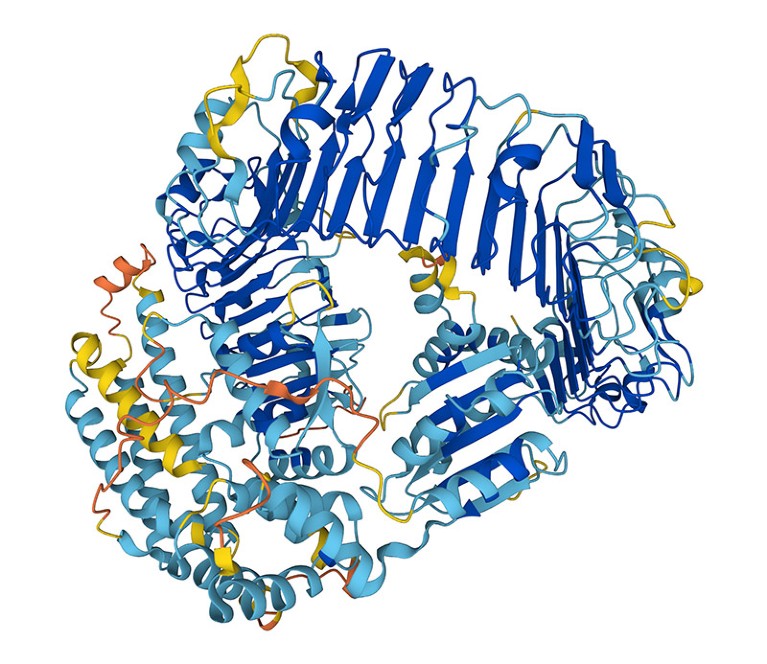

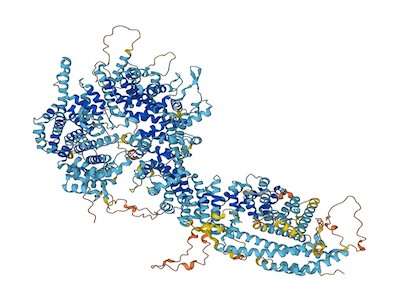

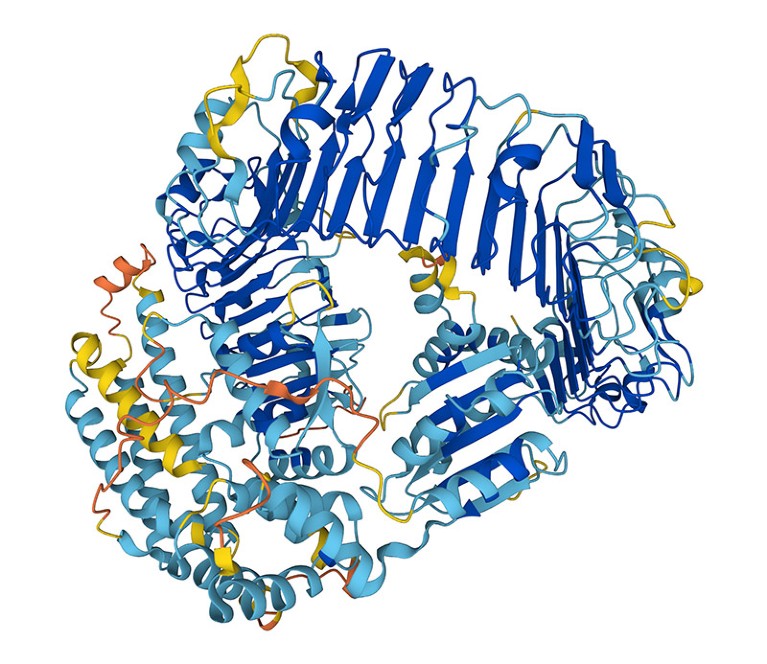

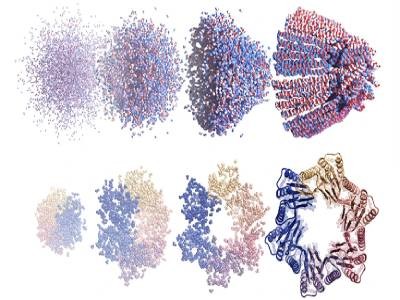

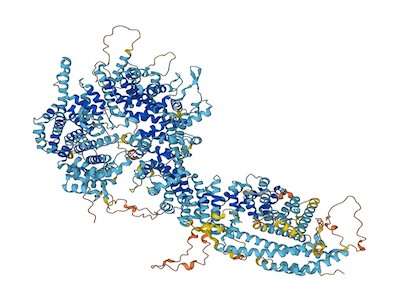

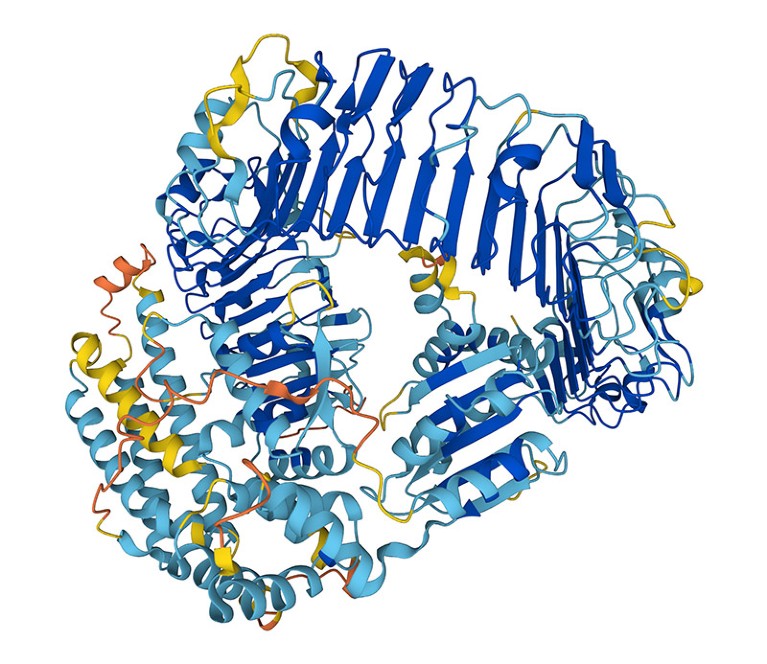

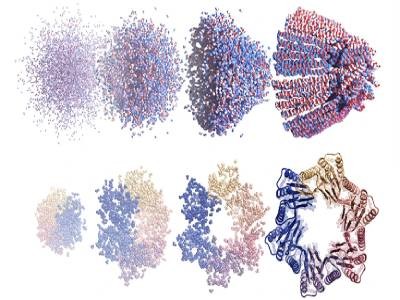

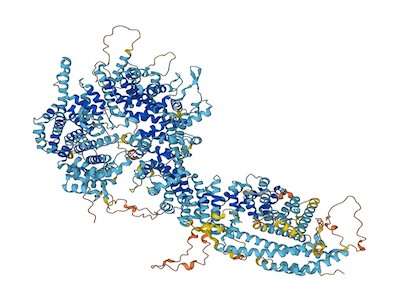

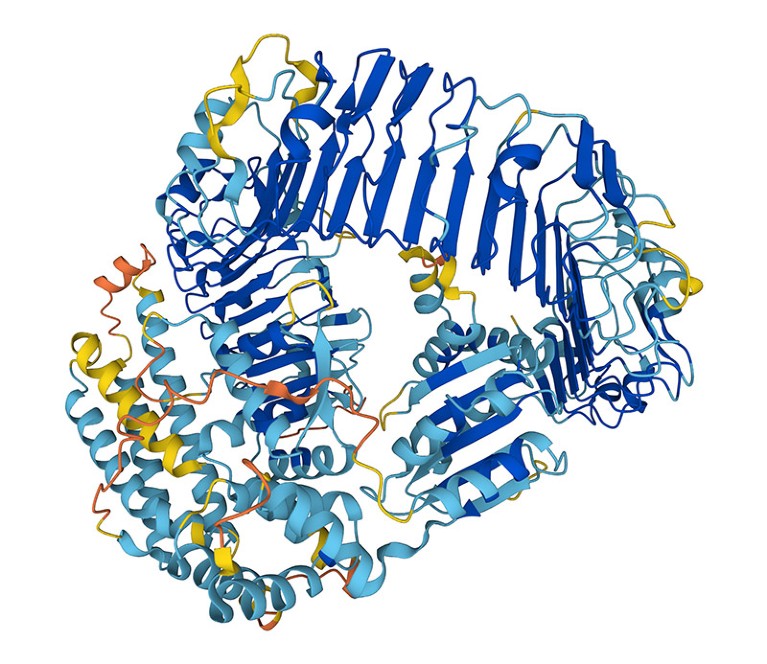

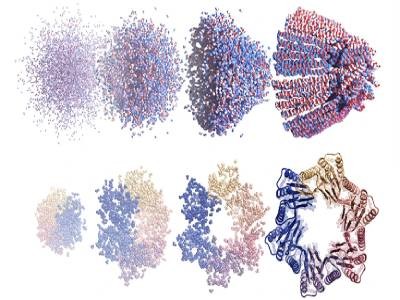

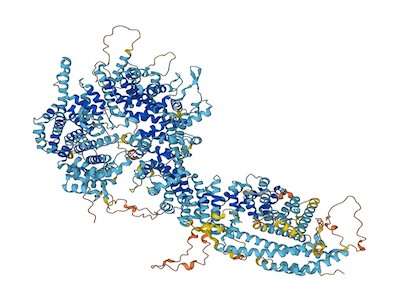

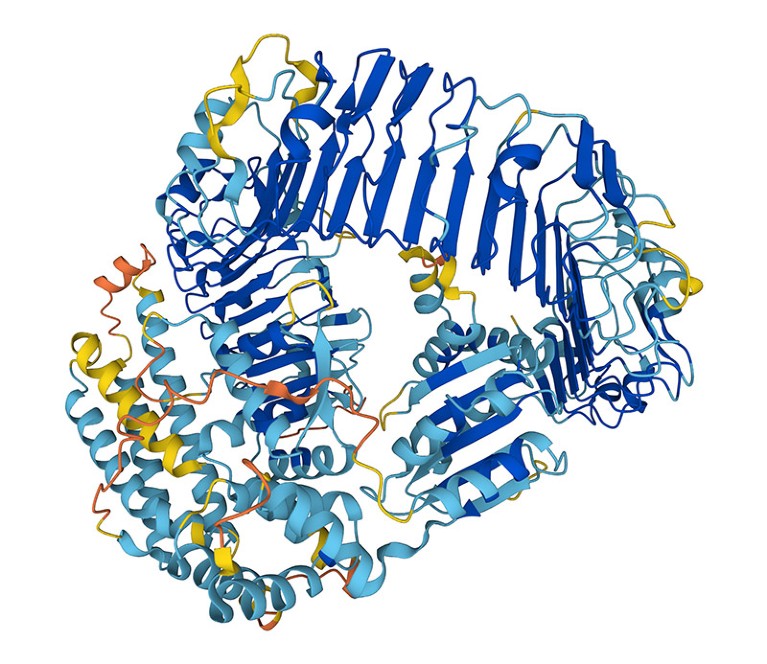

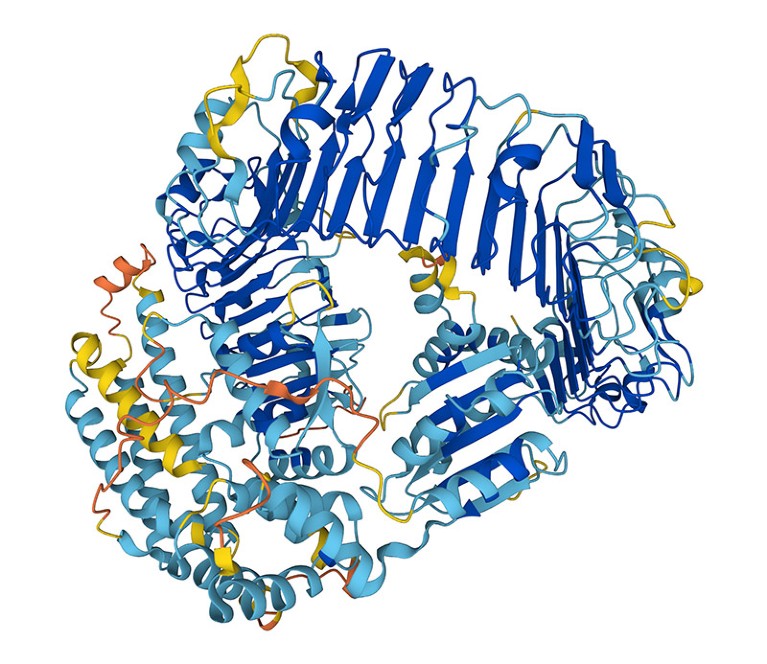

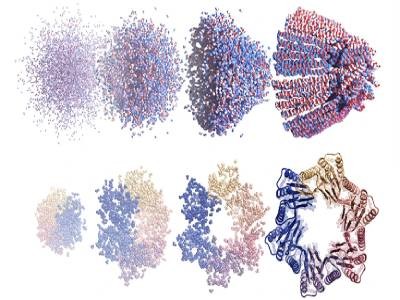

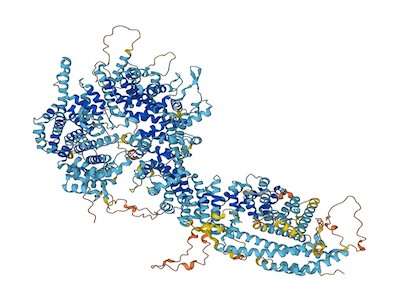

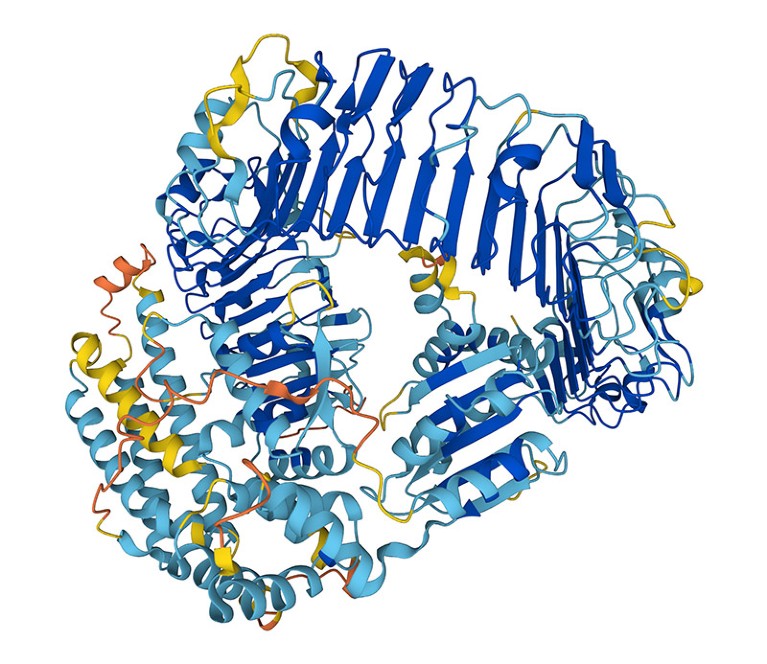

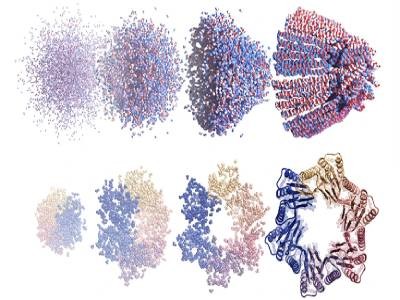

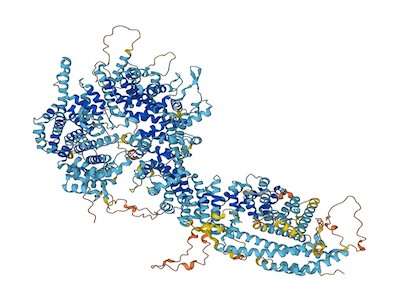

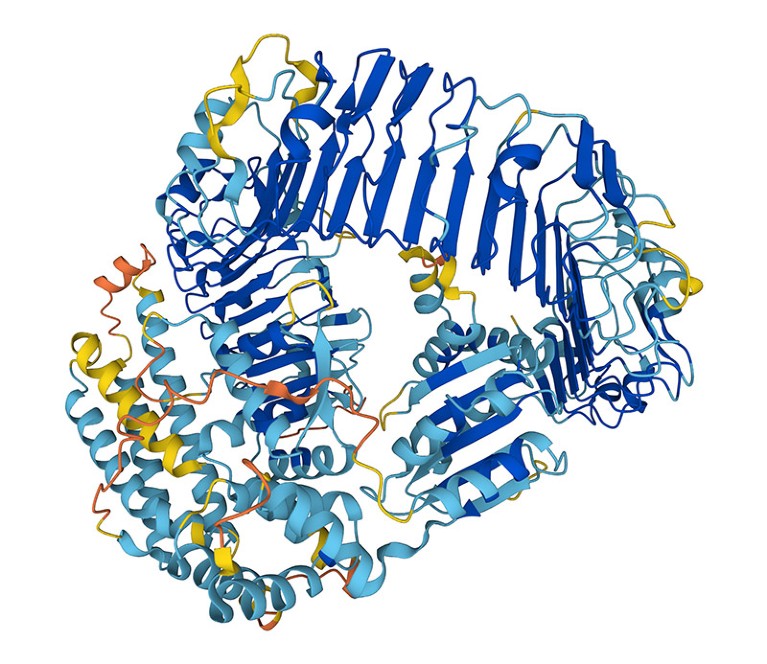

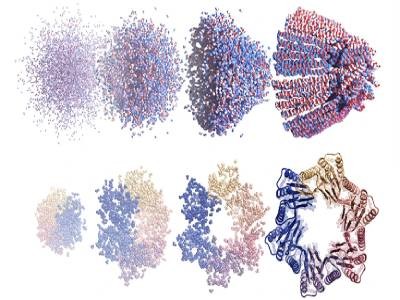

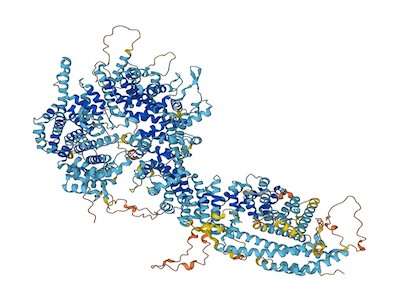

The artificial-intelligence tool AlphaFold can design proteins to perform specific functions. Credit: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Could proteins designed by artificial intelligence (AI) ever be used as bioweapons? In the hope of heading off this possibility, as well as the prospect of burdensome government regulation, researchers today launched an initiative calling for the safe and ethical use of protein design.

“The potential benefits of protein design [AI] far exceed the dangers at this point,” says David Baker, a computational biophysicist at the University of Washington in Seattle, who is part of the voluntary initiative. Dozens of other scientists applying AI to biological design have signed the initiative’s list of commitments.

“It’s a good start. I’ll be signing it,” says Mark Dybul, a global health policy specialist at Georgetown University in Washington DC who led a 2023 report on AI and biosecurity for the think tank Helena in Los Angeles, California. But he also thinks that “we need government action and rules, and not just voluntary guidance”.

The initiative comes on the heels of reports from the US Congress, think tanks and other organizations exploring the possibility that AI tools could make it easier to develop biological weapons, including new toxins or highly transmissible viruses. The tools in question range from protein-structure prediction networks such as AlphaFold to large language models such as the one that powers ChatGPT.

Designer-protein dangers

Researchers, including Baker and his colleagues, have been trying to design and make new proteins for decades. But their capacity to do so has exploded in recent years thanks to advances in AI. Endeavours that once took years or were impossible, such as designing a protein that binds to a specified molecule, can now be achieved in minutes. Most of the AI tools that scientists have developed to enable this are freely available.

To take stock of the potential for malevolent use of designer proteins, Baker’s Institute for Protein Design at the University of Washington hosted an AI safety summit in October 2023. “The question was: how, if in any way, should protein design be regulated and what, if any, are the dangers?” says Baker.

The initiative that he and dozens of other scientists in the United States, Europe and Asia are rolling out today calls on the biodesign community to police itself. This includes regularly reviewing the capabilities of AI tools and monitoring research practices. Baker would like to see his field establish an expert committee to review software before it is made widely available and to recommend ‘guardrails’ if necessary.

The initiative also calls for improved screening of DNA synthesis, a key step in translating AI-designed proteins into actual molecules. Currently, many companies providing this service are signed up to an industry group, the International Gene Synthesis Consortium (IGSC), that requires them to screen orders to identify harmful molecules such as toxins or pathogens.

“The best way of defending against AI-generated threats is to have AI models that can detect those threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis company in South San Francisco, California, and chair of the IGSC.

Risk assessment

Governments are also grappling with the biosecurity risks posed by AI. In October 2023, US President Joe Biden signed an executive order calling for an assessment of such risks and raising the possibility of requiring DNA-synthesis screening for federally funded research.

Baker hopes that government regulation is not in the field’s future: he says it could limit the development of drugs, vaccines and materials that AI-designed proteins might yield. Diggans adds that it is unclear how protein-design tools could be regulated, because of the rapid pace of development. “It’s hard to imagine regulation that would be appropriate one week and still be appropriate the next.”

But David Relman, a microbiologist at Stanford University in California, says that scientist-led efforts are not sufficient to ensure the safe use of AI. “Natural scientists alone cannot represent the interests of the larger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that authorities regulation isn’t within the discipline’s future — he says it may restrict the event of medicine, vaccines and supplies that AI-designed proteins may yield. Diggans provides that it’s unclear how protein-design instruments may very well be regulated, due to the speedy tempo of growth. “It’s exhausting to think about regulation that will be acceptable one week and nonetheless be acceptable the following.”

However David Relman, a microbiologist at Stanford College in California, says that scientist-led efforts will not be ample to make sure the protected use of AI. “Pure scientists alone can not signify the pursuits of the bigger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that authorities regulation isn’t within the discipline’s future — he says it may restrict the event of medicine, vaccines and supplies that AI-designed proteins may yield. Diggans provides that it’s unclear how protein-design instruments may very well be regulated, due to the speedy tempo of growth. “It’s exhausting to think about regulation that will be acceptable one week and nonetheless be acceptable the following.”

However David Relman, a microbiologist at Stanford College in California, says that scientist-led efforts will not be ample to make sure the protected use of AI. “Pure scientists alone can not signify the pursuits of the bigger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that authorities regulation isn’t within the discipline’s future — he says it may restrict the event of medicine, vaccines and supplies that AI-designed proteins may yield. Diggans provides that it’s unclear how protein-design instruments may very well be regulated, due to the speedy tempo of growth. “It’s exhausting to think about regulation that will be acceptable one week and nonetheless be acceptable the following.”

However David Relman, a microbiologist at Stanford College in California, says that scientist-led efforts will not be ample to make sure the protected use of AI. “Pure scientists alone can not signify the pursuits of the bigger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that authorities regulation isn’t within the discipline’s future — he says it may restrict the event of medicine, vaccines and supplies that AI-designed proteins may yield. Diggans provides that it’s unclear how protein-design instruments may very well be regulated, due to the speedy tempo of growth. “It’s exhausting to think about regulation that will be acceptable one week and nonetheless be acceptable the following.”

However David Relman, a microbiologist at Stanford College in California, says that scientist-led efforts will not be ample to make sure the protected use of AI. “Pure scientists alone can not signify the pursuits of the bigger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that authorities regulation isn’t within the discipline’s future — he says it may restrict the event of medicine, vaccines and supplies that AI-designed proteins may yield. Diggans provides that it’s unclear how protein-design instruments may very well be regulated, due to the speedy tempo of growth. “It’s exhausting to think about regulation that will be acceptable one week and nonetheless be acceptable the following.”

However David Relman, a microbiologist at Stanford College in California, says that scientist-led efforts will not be ample to make sure the protected use of AI. “Pure scientists alone can not signify the pursuits of the bigger public.”

The factitious-intelligence device AlphaFold can design proteins to carry out particular features.Credit score: Google DeepMind/EMBL-EBI (CC-BY-4.0)

Might proteins designed by synthetic intelligence (AI) ever be used as bioweapons? Within the hope of heading off this risk — in addition to the prospect of burdensome authorities regulation — researchers in the present day launched an initiative calling for the protected and moral use of protein design.

“The potential advantages of protein design [AI] far exceed the hazards at this level,” says David Baker, a computational biophysicist on the College of Washington in Seattle, who’s a part of the voluntary initiative. Dozens of different scientists making use of AI to organic design have signed the initiative’s checklist of commitments.

AI instruments are designing solely new proteins that would rework medication

“It’s an excellent begin. I’ll be signing it,” says Mark Dybul, a worldwide well being coverage specialist at Georgetown College in Washington DC who led a 2023 report on AI and biosecurity for the suppose tank Helena in Los Angeles, California. However he additionally thinks that “we’d like authorities motion and guidelines, and never simply voluntary steerage”.

The initiative comes on the heels of experiences from US Congress, suppose tanks and different organizations exploring the chance that AI instruments — starting from protein-structure prediction networks resembling AlphaFold to massive language fashions such because the one which powers ChatGPT — may make it simpler to develop organic weapons, together with new toxins or extremely transmissible viruses.

Designer-protein risks

Researchers, together with Baker and his colleagues, have been attempting to design and make new proteins for many years. However their capability to take action has exploded lately due to advances in AI. Endeavours that after took years or had been unimaginable — resembling designing a protein that binds to a specified molecule — can now be achieved in minutes. Many of the AI instruments that scientists have developed to allow this are freely accessible.

To take inventory of the potential for malevolent use of designer proteins, Baker’s Institute of Protein Design on the College of Washington hosted an AI security summit in October 2023. “The query was: how, if in any means, ought to protein design be regulated and what, if any, are the hazards?” says Baker.

AlphaFold touted as subsequent massive factor for drug discovery — however is it?

The initiative that he and dozens of different scientists in america, Europe and Asia are rolling out in the present day calls on the biodesign group to police itself. This contains frequently reviewing the capabilities of AI instruments and monitoring analysis practices. Baker wish to see his discipline set up an knowledgeable committee to evaluation software program earlier than it’s made extensively accessible and to suggest ‘guardrails’ if needed.

The initiative additionally requires improved screening of DNA synthesis, a key step in translating AI-designed proteins into precise molecules. Presently, many firms offering this service are signed as much as an business group, the Worldwide Gene Synthesis Consortium (IGSC), that requires them to display orders to establish dangerous molecules resembling toxins or pathogens.

“One of the simplest ways of defending in opposition to AI-generated threats is to have AI fashions that may detect these threats,” says James Diggans, head of biosecurity at Twist Bioscience, a DNA-synthesis firm in South San Francisco, California, and chair of the IGSC.

Danger evaluation

Governments are additionally grappling with the biosecurity dangers posed by AI. In October 2023, US President Joe Biden signed an govt order calling for an evaluation of such dangers and elevating the potential of requiring DNA-synthesis screening for federally funded analysis.

Baker hopes that government regulation isn’t in the field’s future: he says it could limit the development of drugs, vaccines and materials that AI-designed proteins might yield. Diggans adds that it’s unclear how protein-design tools could be regulated, because of the rapid pace of development. “It’s hard to imagine regulation that would be appropriate one week and still be appropriate the next.”

But David Relman, a microbiologist at Stanford University in California, says that scientist-led efforts are not sufficient to ensure the safe use of AI. “Natural scientists alone cannot represent the interests of the larger public.”
