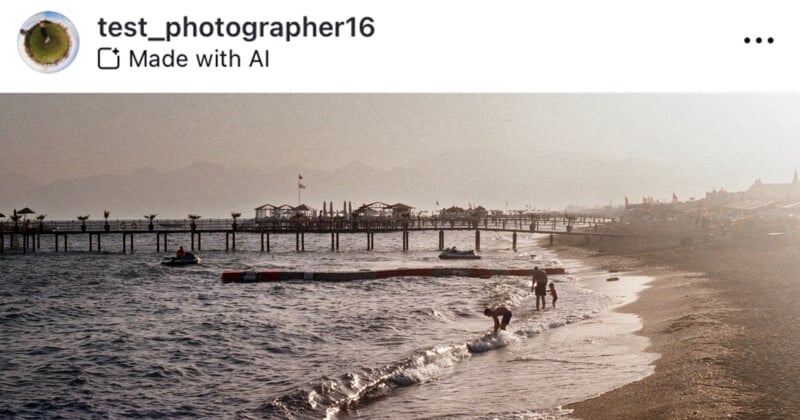

Recently, Instagram has been rolling out its “Made with AI” tag, which ostensibly flags AI-generated images on the platform, but it has been enraging photographers by flagging photos that aren’t AI-generated.

Instagram has revealed very little about how it detects AI content, saying only that it uses “industry-standard indicators.” With this in mind, PetaPixel tried to find all the specific ways the “Made with AI” sticker will be attached to an Instagram post.

Which Photoshop Tools Will Trigger the ‘Made With AI’ Label on Instagram?

Generative Fill

Perhaps one of the biggest bugbears for photographers is that making a minor adjustment to an image using an AI-powered tool in Photoshop results in the post being given a “Made with AI” label.

Just like in my previous tests, when I remove a speck of dust in Photoshop using Adobe’s Generative Fill tool, Instagram gives the photo a “Made with AI” tag once it’s uploaded. This happens despite the fact that I could have achieved the exact same result with the Spot Healing Brush Tool or Content-Aware Fill, neither of which triggers the AI label.

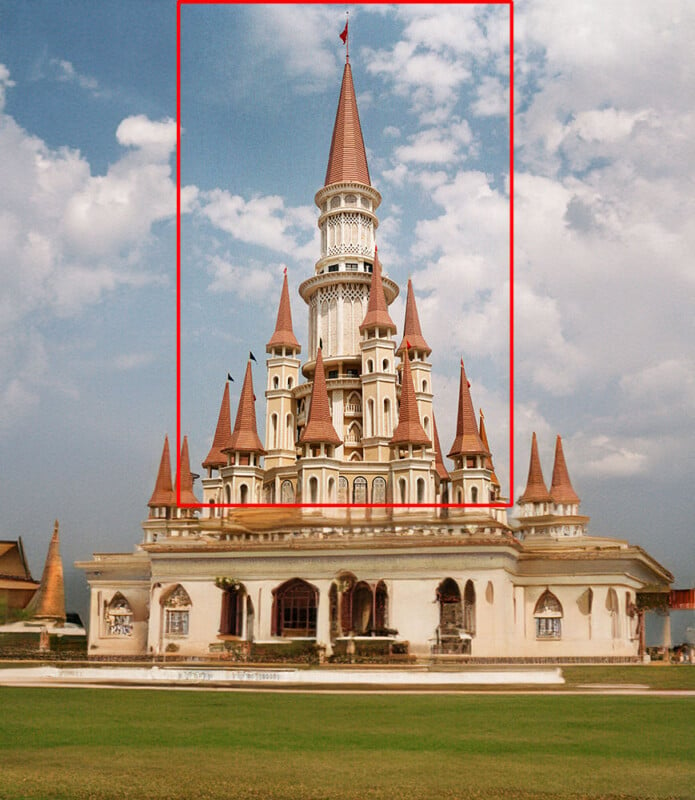

Of course, you can also make big alterations to a photo using Generative Fill, such as below, where I used the Reference Image feature, which lets you upload a reference photo to guide Generative Fill. That also triggers the label.

Generative Expand

Generative Expand, a tool that appears as an option when cropping out from an image, is powered by the same AI technology as Generative Fill, so it’s perhaps no surprise that using this tool will also trigger a “Made with AI” label.

Notable Exceptions in Adobe Lightroom and Photoshop

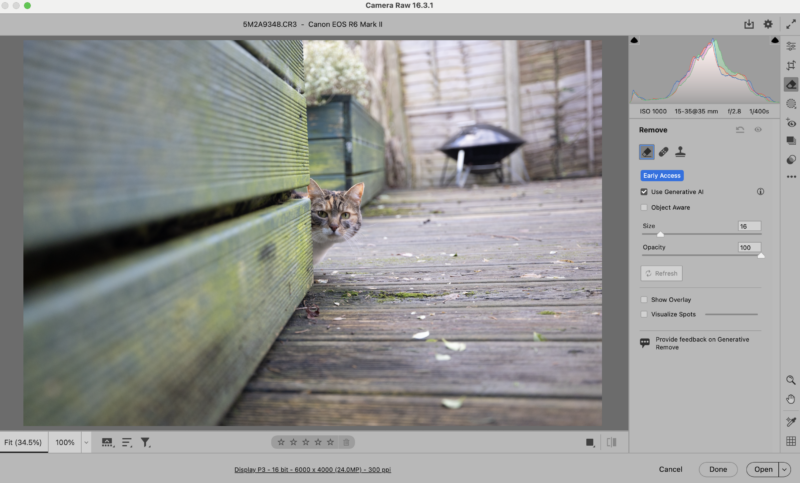

Generative Remove Tool

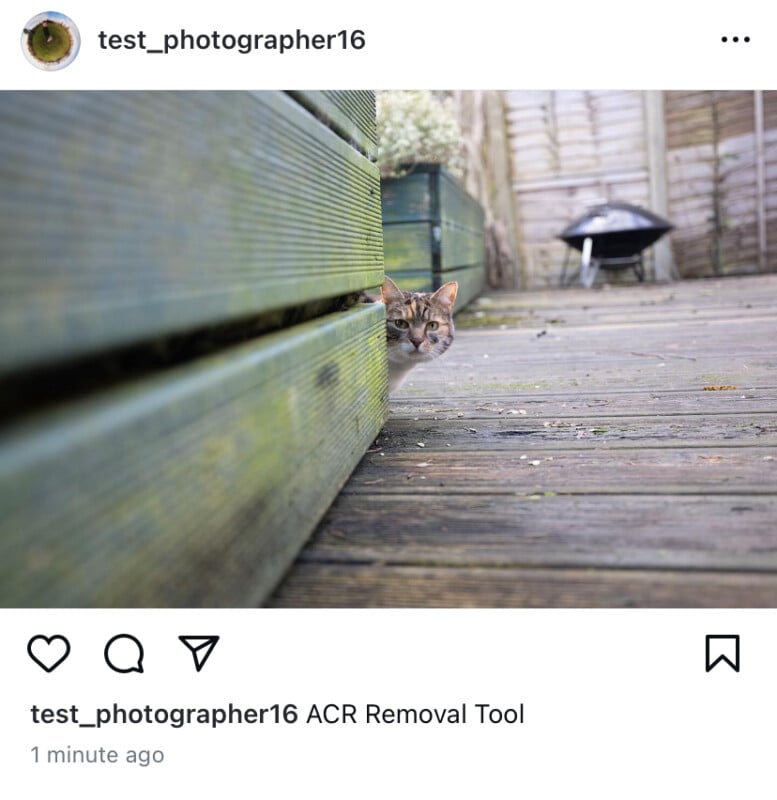

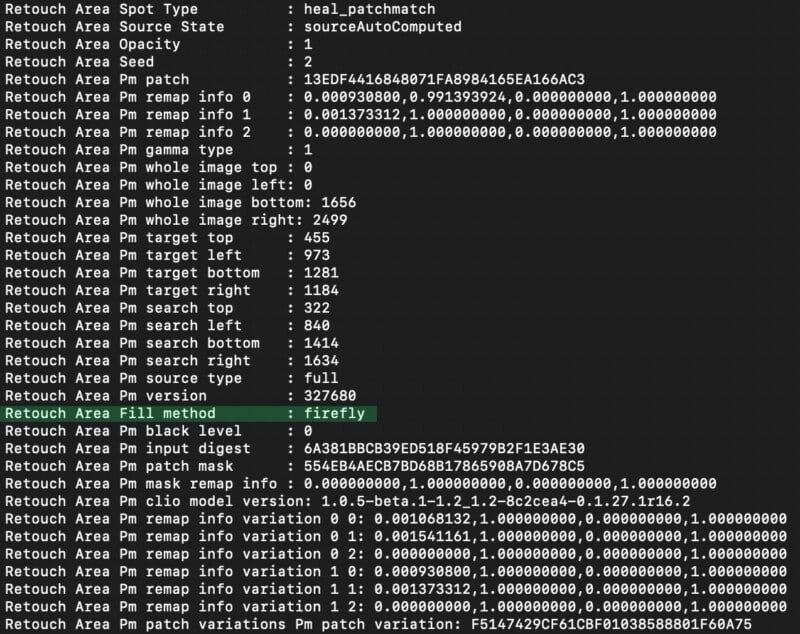

There’s a new Generative Remove tool in Adobe Lightroom and Adobe Camera Raw (ACR), and despite using generative AI technology, it doesn’t trip Instagram’s “Made with AI” label.

Generative Remove is one of the newest and most impressive AI-powered features in Adobe Lightroom and Photoshop (via Adobe Camera Raw). Currently in early access (beta), Generative Remove uses Adobe Firefly technology to remove a brushed object from an image and replace it with new pixels that match the rest of the image.

While the results aren’t always much different from those of something like the Spot Healing tool, Generative Remove is much more reliable and sophisticated based on initial testing.

Despite using generative AI technology, an image edited with Generative Remove, as much a Firefly tool as any other, doesn’t get flagged as “Made with AI” on Instagram. Why?

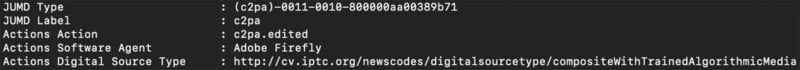

PetaPixel contacted Adobe for comment, but digging through image metadata uncovers a possible explanation. Unlike an image edited using Generative Fill, a photo edited with Generative Remove doesn’t get tagged with any C2PA information. C2PA data is vital to Adobe’s Content Authenticity Initiative (a growing group that Meta is notably absent from) because it allows software tools to know when an image has been edited.

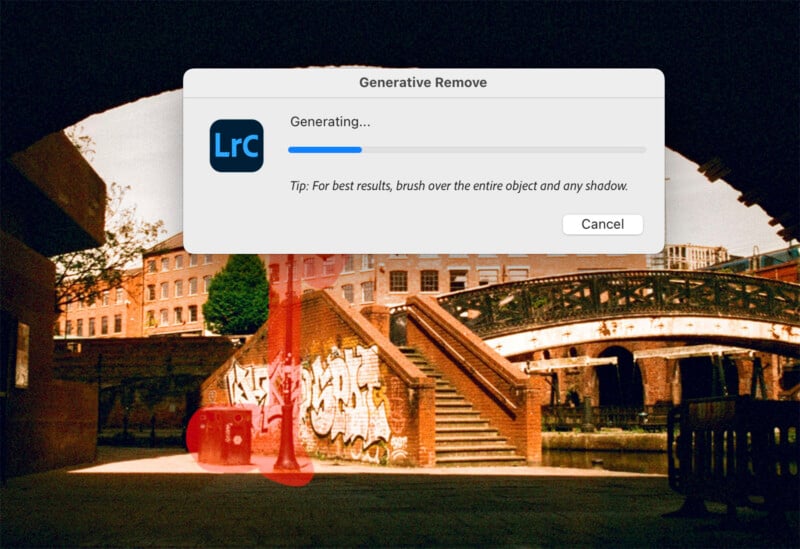

While a file edited in Lightroom using Generative Remove does carry a “Firefly” tag inside the “Retouch Area Fill method” field, a photo with Generative Fill applied has far more in its metadata. In the JUMD Type and JUMD Label fields, there are C2PA flags, plus an explicit mention of “Adobe Firefly.” There are also C2PA claim generator flags and a C2PA signature in the metadata.
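The difference described above can be checked roughly by hand. The following is a minimal sketch, not Adobe’s or Instagram’s actual method: it scans a file’s raw bytes for the strings that C2PA data typically leaves behind, namely the JUMBF box types `jumb` and `jumd` and the `c2pa` label. The function names and the marker list are assumptions made for illustration, and a plain byte search only hints that a manifest is present. It does not parse or validate one.

```python
from typing import Dict

# Byte markers assumed for illustration: JUMBF box types, the C2PA label,
# and the explicit "Adobe Firefly" mention described in the article.
MARKERS: Dict[str, bytes] = {
    "jumbf_superbox": b"jumb",
    "jumbf_description": b"jumd",
    "c2pa_label": b"c2pa",
    "firefly_mention": b"Adobe Firefly",
}


def scan_for_markers(data: bytes) -> Dict[str, bool]:
    """Report which marker byte strings occur anywhere in the file bytes."""
    return {name: marker in data for name, marker in MARKERS.items()}


def looks_c2pa_tagged(data: bytes) -> bool:
    """Heuristic only: a C2PA label plus at least one JUMBF box marker."""
    found = scan_for_markers(data)
    return found["c2pa_label"] and (
        found["jumbf_superbox"] or found["jumbf_description"]
    )
```

To try it on a real export, read the file in binary mode, for example `looks_c2pa_tagged(open("photo.jpg", "rb").read())`, where `photo.jpg` is a placeholder path. A dedicated metadata reader will give a more trustworthy answer than this byte search.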

Regardless of why Generative Remove doesn’t instigate C2PA flagging, at least not yet, the inconsistencies underlie part of the issue with Meta’s “Made with AI” label. It’s entirely slapdash in its execution and does nothing substantive to reduce the potential harm caused by AI-generated and edited photos.

Other Tools in Photoshop

In PetaPixel’s tests, the following tools also did not trigger Instagram’s AI label: Neural Filters, Sky Replacement, AI-powered noise reduction, and Super Resolution. It’s worth noting that these features use Adobe Sensei AI technology, not Firefly. Then again, Meta’s tag isn’t “Made with Firefly,” it’s “Made with AI.”

Do AI-Generated Images Trigger the ‘Made With AI’ Label on Instagram?

DALL-E

OpenAI’s DALL-E image generator is a popular choice, and it will trigger Meta’s “Made with AI” label.

Adobe Firefly

Given how some Firefly-powered features result in Meta’s “Made with AI” tag appearing on Instagram, it should come as little surprise that an image outright generated using Firefly comes with an AI content warning.

Stable Diffusion

That said, not all AI image generators cause the “Made with AI” tag to appear. Users can create images in Stable Diffusion, export them from the platform, and upload them to Instagram without any concerns about an AI label.

Meta’s AI Image Generator

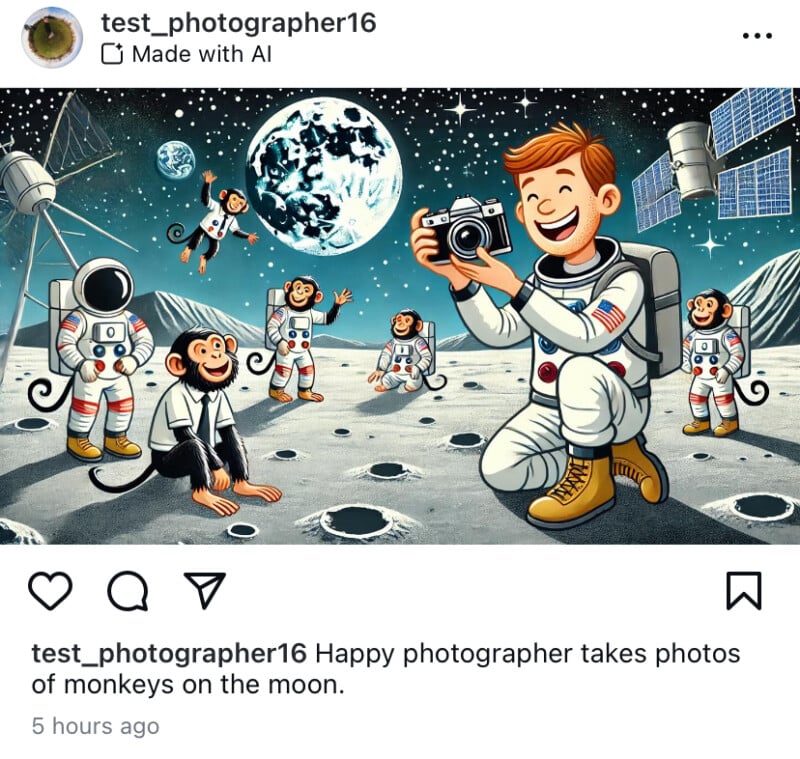

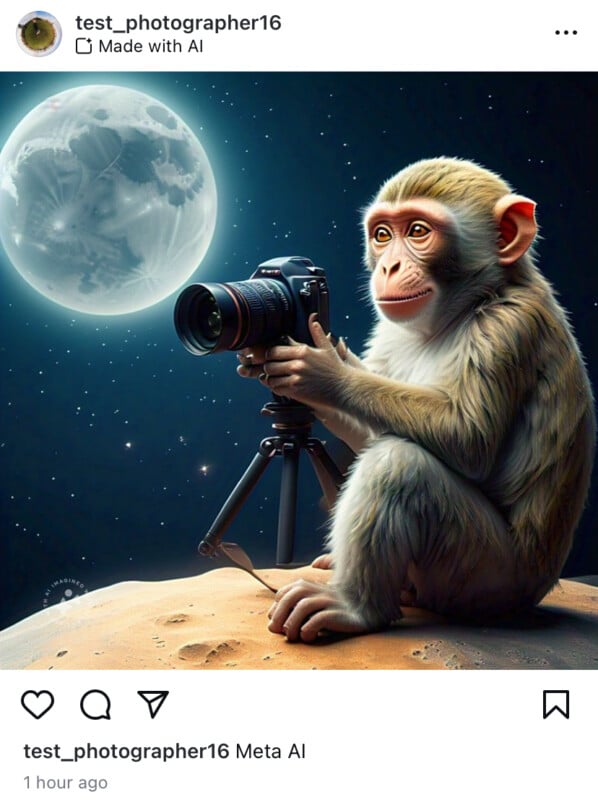

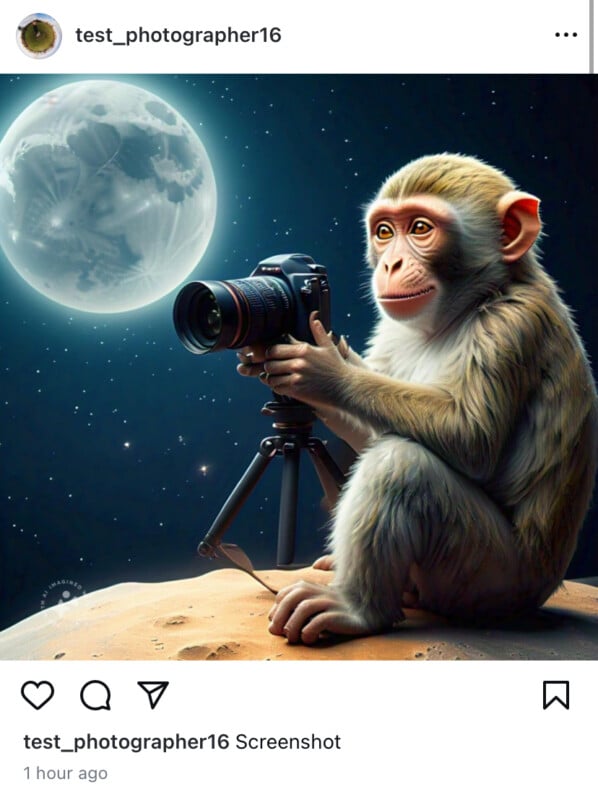

Instagram’s parent company Meta has its own AI image generator, which we cheekily used to see if Meta will flag its own AI images.

And while it does flag them, a simple screenshot can bypass the label, despite Meta’s AI watermark still being visible on the image itself.

What Does Instagram’s ‘Made with AI’ Label Actually Achieve?

Considering how easy it is to circumvent Meta’s AI-detection system (if you know how to edit photos, you can bypass Meta’s current checks), it raises the question, “What’s the point?” So far, Instagram’s “Made with AI” label has managed to incorrectly label some real, very-much-not-AI photos, sullying the reputation of respected photographers, and to completely miss photos that were actually edited with AI, even when no effort was made to camouflage the use of generative AI.

While the need for some form of content authenticity is real, and growing ever more critical by the day, Meta’s approach is ham-fisted. All indications point to a simple metadata scrape, which is not only easy to deceive but also unreliable, depending on the software and individual tools a person uses to create an image.
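To see why a metadata scrape would behave this way, consider a purely hypothetical rule that labels a post whenever any metadata value mentions C2PA. This is not Meta’s code, and Meta has not disclosed how its detection works. The function name and the sample field values below are made up for illustration, loosely modeled on the fields described earlier.

```python
from typing import Mapping


def would_label(metadata: Mapping[str, str]) -> bool:
    """Hypothetical rule: label the post if any metadata value mentions C2PA."""
    return any("c2pa" in str(value).lower() for value in metadata.values())


# Illustrative, made-up metadata; real files contain many more fields.
generative_fill = {"JUMD Label": "c2pa", "Claim Generator": "Adobe Firefly"}
generative_remove = {"Retouch Area Fill Method": "Firefly"}
screenshot = {}  # a screenshot carries none of the original file's metadata

for name, meta in [
    ("Generative Fill", generative_fill),
    ("Generative Remove", generative_remove),
    ("Screenshot", screenshot),
]:
    print(name, would_label(meta))
```

Under this assumed rule, only the Generative Fill sample is labeled. An edit that writes no C2PA data, or an image whose metadata has been stripped, passes through untouched, which matches the pattern seen in the tests above.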

Arguably, a C2PA-based approach makes a ton of sense, but Meta has it all backward. Instead of looking for evidence that an image has been edited using generative AI, perhaps Instagram should look for evidence that an image hasn’t been edited, and those images can get a label that verifies them as authentic. This type of technology already exists, and the Content Authenticity Initiative is hard at work developing ways to make it more accessible. If only Meta wanted to play ball.

Instead, photographers have to worry about whether their legitimate images will be mislabeled. There’s no doubt that in the age of AI, it’s hard to trust what we see online. Even perfectly legitimate images have come under undue scrutiny, long before “Made with AI” labels started appearing on Instagram.

It’s a big problem that requires a thoughtful solution, and the current iteration of “Made with AI” labels on Instagram is definitely not it.

A Constantly Moving Target

We have endeavored to find every photo editing tool that will trigger a “Made with AI” label, but there may be more, and we will update this article if and when we find any. There is also a significant chance that Meta’s seemingly rudimentary AI-detection technology will undergo continual changes, meaning that some features that don’t currently trigger a “Made with AI” label may do so in the future.

Additional reporting by Jeremy Gray.